Tapping Twitter data for in-depth analysis of public opinion

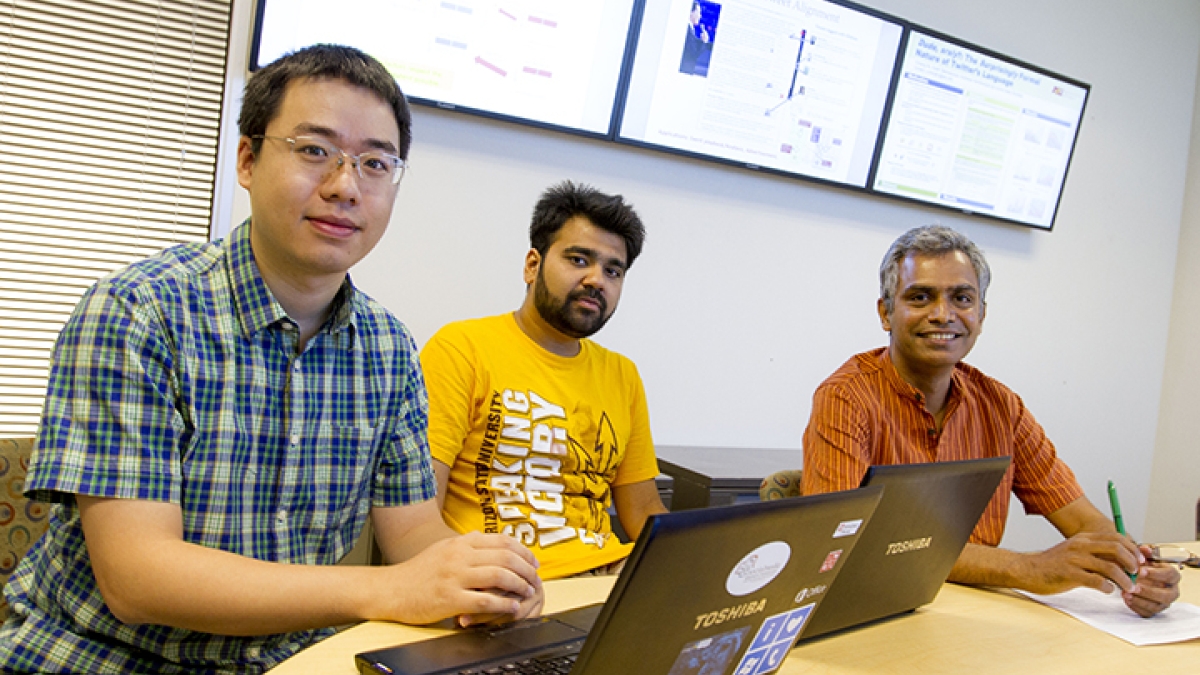

Social scientists and others seeking insight into public opinion and behavior might benefit from Twitter-based research being conducted by two Arizona State University computer science doctoral students.

Yuheng Hu and Kartik Talamadupula are developing computer models for analytical systems that can harness massive amounts of data generated by Twitter and organize it in a rapid, reliable and efficient fashion.

Such a capability can help provide a sound basis for advanced statistical analysis of public opinion that develops in reaction to various events or to the emergence of social and political issues and controversies.

Hu and Talamadupula are doing the research under the supervision of Subbarao Kambhampati, a professor in the School of Computer, Informatics, and Decision Systems Engineering, one of ASU’s Ira A. Fulton Schools of Engineering.

Kambhampati says the students’ research demonstrates how computer science can provide more sound information to help political scientists, social psychologists, linguists, journalists and others in similar fields gain more certainty in exploring societal attitudes and trends.

Gauging public reaction

Hu has created a computer model that performs a process he calls “sentiment analysis,” using data from Twitter messages to determine public reaction to various events. He tested the model during the presidential candidates’ debates before the 2012 national elections.

Hu’s system enabled him, for instance, to quickly determine whether there was a more positive or negative public reaction on Twitter to various comments made by the candidates.
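The article does not describe how Hu's model works internally. As a rough illustration of the general idea of tallying positive and negative reactions, the sketch below uses a simple word-list approach. This is not Hu's method: the word lists, function names and sample tweets are all hypothetical.

```python
import re
from collections import Counter

# Hypothetical, tiny word lists for illustration only; a real system
# would need far richer resources than these.
POSITIVE = {"good", "great", "strong", "agree", "love", "win"}
NEGATIVE = {"bad", "weak", "wrong", "disagree", "hate", "lie"}

def tweet_polarity(text):
    """Label one tweet 'positive', 'negative' or 'neutral' by word counts."""
    words = re.findall(r"[a-z']+", text.lower())
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"

def reaction_summary(tweets):
    """Count how many tweets fall into each polarity class."""
    return Counter(tweet_polarity(t) for t in tweets)

# Made-up sample tweets, not data from the study.
sample = [
    "Great answer on the economy, I agree",
    "That claim is just wrong",
    "Watching the debate tonight",
]
print(reaction_summary(sample))
```

Grouping the tweets by the minute they were posted before counting would give a crude picture of how reaction shifts as a debate unfolds.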

Closely watched events such as political debates and campaign speeches generate thousands of tweets that indicate how the public feels about various subjects. But usually an analysis of Twitter messages is done manually, which provides only a limited sample of tweets with which to determine trends in public opinion.

Analysts “might be able to manually sort through 300 tweets to study language or analyze public sentiment, but they lose out on a lot of information and the process is inefficient,” Kambhampati explains.

Hu’s model can quickly segment and align tweets according to the content of the messages and what they express about events and issues, thus providing a more accurate gauge of public attitudes.

Twitter-based linguistics

Hu teamed up with Talamadupula for a second project to conduct a computational linguistic analysis of the language used on Twitter. They were curious about whether the language of Twitter most resembles the way people communicate in text messages, in e-mails or in the more formal language of magazines.

“All forms of social media and written publications have their own linguistic expectation,” Kambhampati says. Text messages contain language-shortening techniques, abbreviations and slang more frequently than e-mails, for instance.

“Linguists have been debating about the implications of the language of Twitter for some time, but Hu’s and Talamadupula’s research brings a needed large-scale analysis to the discussion,” he says.

Hu and Talamadupula conducted a computational linguistic analysis that took a “snapshot” from a portion of the Twitter firehose from June to August 2011. In this snapshot of thousands of tweets they found that the language tends to resemble e-mail and magazine language more than the language used in text messages.

Hu says they found Twitter language “surprisingly formal,” revealing that people resist word shortening and slang despite having to limit tweets to 140 characters.
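One simple way to put a number on "word shortening and slang" is to measure what fraction of words in a set of messages comes from a list of known shortenings. The sketch below is only an assumption about how such a comparison could be set up, not the researchers' actual analysis. The shortening list and both sample sets are hypothetical.

```python
import re

# Hypothetical shortlist of texting-style shortenings; illustrative only.
SHORTENINGS = {"u", "ur", "r", "gr8", "lol", "omg", "thx", "pls", "b4", "2moro"}

def shortening_rate(messages):
    """Return the fraction of word tokens that appear in SHORTENINGS."""
    tokens = [w for m in messages for w in re.findall(r"[a-z0-9']+", m.lower())]
    if not tokens:
        return 0.0
    return sum(w in SHORTENINGS for w in tokens) / len(tokens)

# Made-up examples standing in for text messages and tweets.
texts = ["omg u r gr8", "thx see u 2moro"]
tweets = ["The debate starts at nine tonight", "Great article on the election"]

print(shortening_rate(texts), shortening_rate(tweets))
```

A lower rate for one collection than another would suggest it leans toward more formal language, though a real comparison would need much larger samples and a carefully built word list.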

New social science tool

Kambhampati says both projects exemplify the emergence of “computational social science,” a powerful tool for those in social science fields to more accurately analyze large amounts of data and provide a more solid basis for identifying or predicting trends in societal attitudes and behavior.

“Human behavior is dynamic and often hard to understand. This research has been a great opportunity to know people better through social media,” Hu says.

Kambhampati says the analysis of social media, which is used millions of times each day, gives researchers a larger trove of data than standard polls and surveys from which to derive empirical evidence of public sentiment.

The kind of large-scale data offered by the computational methods Hu and Talamadupula are developing “is just the beginning” of advanced analytics that can have far-reaching impacts on sociological research, Kambhampati says.

Hu and Talamadupula presented their research at the International Conference on Weblogs and Social Media in Boston in July, and Kambhampati made a presentation at the International Joint Conference on Artificial Intelligence in Beijing, China, in August.

Emerging research field

In addition, Kambhampati recently received a $55,000 Google Research Award to support work related to the Twitter Alignment project. It is Kambhampati’s third Google Research Award.

He says the awards keep coming because Google respects the work ASU researchers are doing in this area and the company has an interest in the emerging field of “computational journalism” tied to Twitter.

“Reporting on Twitter and analyzing what is said are new and emerging areas of journalism,” says Kambhampati. “Our research clearly relates to that emerging field.”

Kambhampati plans to use the Google grant to expand research in “ways that we haven’t even fully realized yet.”

In addition to presenting the research at conferences, Hu spent his summer doing a Microsoft Research internship in Washington state. He performed similar research focused on using Twitter to predict users’ engagement in local events.

In collaboration with Srijith Ravikumar, who is pursuing a master’s degree in computer science at ASU, Talamadupula began research on new algorithms to rank Twitter search results according to the interests of specific users. Talamadupula reported on the research at the annual Association for the Advancement of Artificial Intelligence (AAAI) conference in Washington state in July.

Their paper has also been accepted for presentation at the Association for Computing Machinery (ACM) International Conference on Information and Knowledge Management in San Francisco in October.

Written by Rosie Gochnour and Joe Kullman